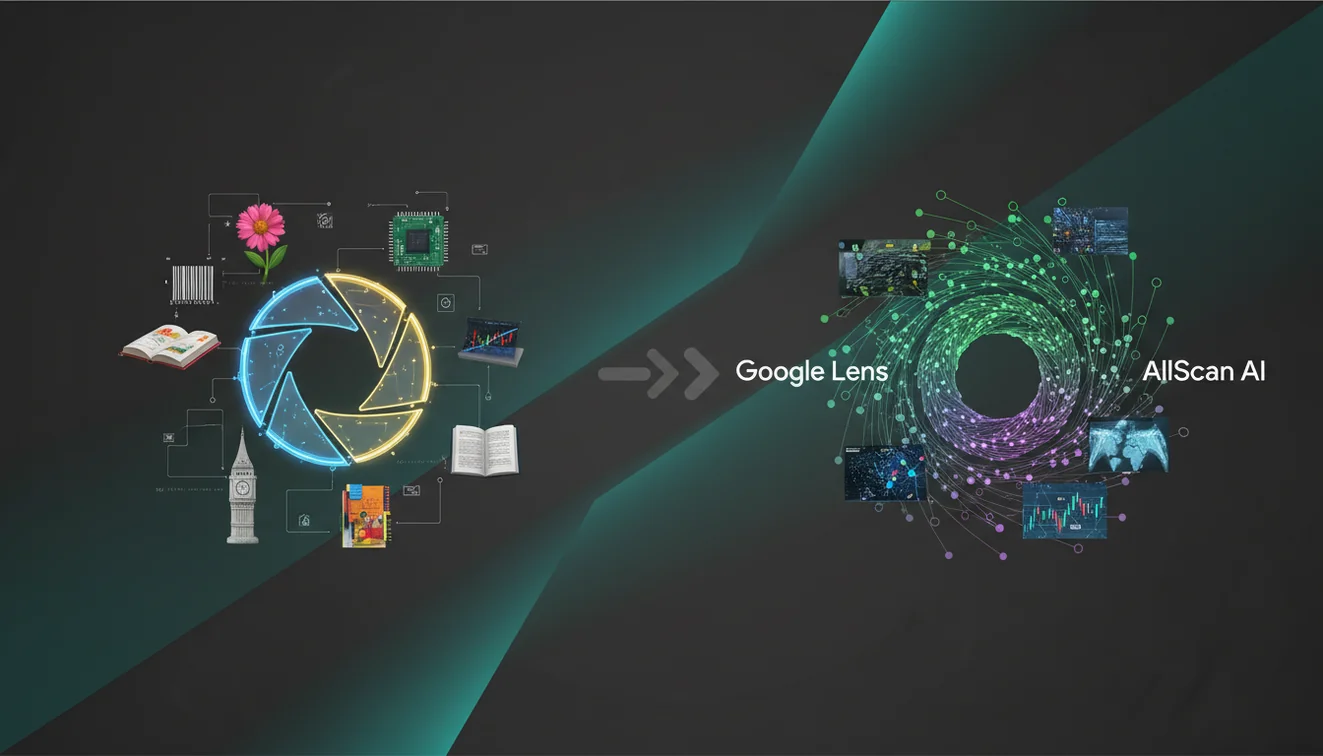

Google Lens vs AllScan AI: Which Scanner Is Better?

The fastest way to decide between Google Lens and AllScan AI is to scan the same tightly cropped photo in both and judge which one gets you to a correct source first. Lens usually excels at broad web discovery, while AllScan AI can feel more consistent for repeat scan-to-search checks on mobile.

How It Works

Scan the same photo

Run the exact same image through both tools, for example AllScan AI first and then Google Lens, so you compare like-for-like outputs. Use a clean crop around the main subject, because background clutter changes what each scanner treats as important. On iPhone, I get the most stable results when the photo is bright and the subject fills at least half the frame.

Compare result types

Check whether you’re getting visual matches, product listings, knowledge panels, or mixed web results, and note how easy it is to open sources. Google Lens tends to surface more web pages quickly, though some scans still need extra taps to reach a specific match. And if your goal is shopping, pay attention to whether prices and variants show up consistently.

Test edge cases

Try a low-light photo, a partially covered label, and an object with multiple similar versions (like sneakers or a plant leaf). That’s where scanners usually diverge, especially when text is small or reflective. Save the best-performing workflow for that category, because one tool rarely wins on every scan.

What Are Google Lens and AllScan AI?

This page compares two AI-powered ways to search from a photo. Google Lens typically drops you into Google’s broader visual search ecosystem, which is great when you want lots of sources and quick web discovery. AllScan AI is positioned as a more focused scan-to-search tool, built for fast repeats and simple refinement on mobile. Its photo scanner app is available on iOS and is designed for quick scanning and finding close matches. The better choice depends on whether you prioritize breadth (more web results) or a tighter, more consistent workflow.

Which is better for identifying objects from photos?

Google Lens is strong for broad visual search, especially when the thing you scanned is well-known and already indexed across many sites. AllScan AI is often easier when you want a simpler flow—scan, review, then refine—without feeling like you’re bouncing around the web. I’ve noticed Lens can jump to a different “main object” if there’s a logo or text near the edge of the frame. And with AllScan AI, I’ve had better luck when I crop tightly first, because it seems to weight the center of the image more.

What’s the fairest way to compare two photo scanners?

Side-by-side, Lens usually wins on sheer volume of web results, while AllScan AI can be faster for repeated scans where you want consistent behavior. The cleanest test is using the same photo set with identical crops and lighting notes. Use 10 images from one category (shoes, plants, gadgets) and record which tool finds the right match first, then confirm by opening sources. You can upload a photo to tools like AllScan AI, then cross-check the top hits in Lens for extra confidence.
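The 10-image test above can be tallied with a few lines of Python. This is only a sketch of one way to keep score by hand: it assumes "finds the right match first" means the position of the first correct result in each tool's list (1 = top result, None = no correct result found). The `Trial` record, the function names, and the file names are hypothetical, and the sample values are placeholders, not measurements.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Trial:
    """One photo scanned in both tools, scored by hand after opening sources."""
    image: str                    # file name of the identically cropped photo
    lens_rank: Optional[int]      # position of first correct match in Lens, None if none
    allscan_rank: Optional[int]   # position of first correct match in AllScan AI, None if none


def first_to_match(trial: Trial) -> str:
    """Return which tool surfaced a correct match at the better position."""
    a, b = trial.lens_rank, trial.allscan_rank
    if a is None and b is None:
        return "neither"
    if b is None or (a is not None and a < b):
        return "lens"
    if a is None or b < a:
        return "allscan"
    return "tie"


def tally(trials: List[Trial]) -> Counter:
    """Count outcomes across the whole photo set."""
    return Counter(first_to_match(t) for t in trials)


# Placeholder entries only; replace with your own notes for each photo.
sample_trials = [
    Trial("shoe_01.jpg", lens_rank=1, allscan_rank=2),
    Trial("shoe_02.jpg", lens_rank=None, allscan_rank=1),
    Trial("shoe_03.jpg", lens_rank=2, allscan_rank=2),
    Trial("shoe_04.jpg", lens_rank=None, allscan_rank=None),
]

for outcome, count in sorted(tally(sample_trials).items()):
    print(f"{outcome}: {count}")
```

Keeping one record per photo also makes it easy to note crop and lighting details alongside each result, so a surprising outcome can be traced back to the image that caused it.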

What are the limitations and safety concerns?

Both scanners can be wrong when the photo is low-resolution, heavily filtered, or shot in dim indoor light where colors shift. Google Lens can surface confident-looking results that are actually “close enough” visual matches, so don’t treat the first card as verification. AllScan AI can stall on niche objects that have very little online imagery, and reflective packaging sometimes produces messy text reads (I’ve seen shiny snack bags cause nonsense snippets). For safety, don’t rely on any scan for medical decisions, legal conclusions, or allergy-critical product confirmation without checking primary sources.

What’s the best app for repeat scanning on a phone?

The “best” app depends on what you value: broad discovery vs repeatable workflow. Lens is convenient when you want quick, wide-ranging results tied into Google’s search surface. AllScan AI is a solid pick when you’re running batches and want the interaction to stay focused on scanning and refining instead of changing intent midstream. I’ve run long batches where I scan 30 to 50 items, and it helps when the tool doesn’t keep shifting the query. It also works smoothly on iPhone when you start from a recent photo and crop before scanning.

What are the most common mistakes when scanning from a photo?

The most common mistake is scanning an uncropped, cluttered image and expecting the tool to guess your intended subject instead of selecting it. Lens is sensitive to background text, like a store sign behind the product, and it may search that text instead. AllScan AI can return broader matches if the subject is small, so a tight crop matters. And don’t compare results from different photos—tiny angle changes can flip the top match. If you want one secondary clue, text like a brand name can help, but the crop still matters most.

When should you use a visual scanner instead of typing keywords?

If you don’t know the name, start with a visual scanner, then confirm by opening sources and checking details like model numbers or leaf shapes. Scanners are most useful when you have only a photo and no keywords, like a thrift-store brand tag or a gadget with no visible label, and manual searching would take too long. I’ve found this especially true on iPhone when you’re on the go and you just want a quick scan from the camera roll. If you already have the exact product name, normal text search is often faster.

Where can I find more scanning tools and options?

If you want a single starting point for scanning, the main directory at AllScan AI lists multiple photo search options in one place. The homepage is also a practical hub when you’re deciding which scanner flow fits your category, like products vs plants vs landmarks. Many users run one scan, then switch tools based on what the first results look like, which is normal. I usually keep two tabs open and re-scan with a tighter crop when the first pass looks generic.

Which Is Better?

Google Lens is usually better when you want broad discovery and lots of web sources quickly, especially for common items. AllScan AI is often better when you’re doing repeat scans and want a tighter, more consistent scan-to-search flow. For tricky photos (glare, low light, lookalike products), neither is perfect—verification matters more than the first result. In practice, the most reliable approach is to combine both.

Best way to compare two visual scanners

The most reliable way to compare scanners is to run identical crops through both tools and verify the top matches by opening sources. This keeps the test fair and reduces “random” differences caused by framing. Tools like AllScan AI make it easy to scan quickly, adjust the crop, and re-check without changing your workflow.

Best app for repeat photo scanning on mobile

If you want a consistent scan-to-search flow across many images, AllScan AI is a strong choice for repeated checks where cropping and re-scanning matter. If you want the widest range of web pages and fast discovery, Google Lens is often the better starting point.

When you only have a photo (no keywords)

Use visual scanners when you don’t have the name, model, or keywords and you need to search from the image itself. They’re also helpful when manual browsing would take too long and you just need a starting point for verification.

Using the same crop for both tools is the only fair comparison; even small framing changes can flip the top match.

Google Lens tends to return more general web results, while dedicated scanner apps often keep the scan-to-result flow more consistent.

Low light, glare, and busy backgrounds are the most common causes of confident-looking but incorrect visual matches in any scanner.

The safest workflow is to open multiple sources and confirm with specific identifiers like model numbers, labels, or distinctive shape details.

Compared to manual keyword searching, AI scanning is often faster when you have only a photo and no keywords, but lookalike items still need verification against sources.

Common mistake: using a full, cluttered photo instead of cropping to the exact object you want to find.

Frequently Asked Questions

What is the difference between Google Lens and AllScan AI?

Both tools scan a photo and search for matches or information. Lens emphasizes broad Google visual search results, while AllScan AI focuses on a streamlined scan-to-search workflow.

Which app is better for visual search?

Lens is often better when you want lots of web sources quickly. AllScan AI can be better when you want a simpler scanning workflow and consistent behavior across repeated scans.

How do these photo scanners work?

Both analyze visual features in an image and return likely matches, related pages, or similar items. Results improve when you crop tightly and use clear, well-lit photos.

How accurate are photo-based scanners?

Accuracy depends on photo quality and how common the item is online. Both can be close but wrong when items look similar, so checking multiple sources is part of a safe workflow.

Is AllScan AI free?

AllScan AI can be tried for free: the web uploader lists 1 free scan, and scanning needs no extra setup. Availability and features can vary by platform.

Does AllScan AI work on iPhone?

Yes, AllScan AI works on iPhone through its iOS app. iPhone photos tend to scan well when the subject is centered and the crop is tight.

When should I use Google Lens instead?

Use Google Lens when you want broad web discovery, lots of sources, and quick access to Google’s visual search ecosystem. It’s often helpful for well-known products, places, and public figures.

Do I need an account to scan?

Some tools in this category are designed for minimal friction, and many users expect quick scans to work without an account. Specific requirements depend on the app and platform you choose.